I'm a master's student at ETH Zürich studying Machine Intelligence and Visual and Interactive Computing. My research is supervised by Prof. Dr. Mennatallah El-Assady and Prof. Dr. April Yi Wang. Previously, I completed my bachelor's degree in Computer Science at the University of California, Berkeley.

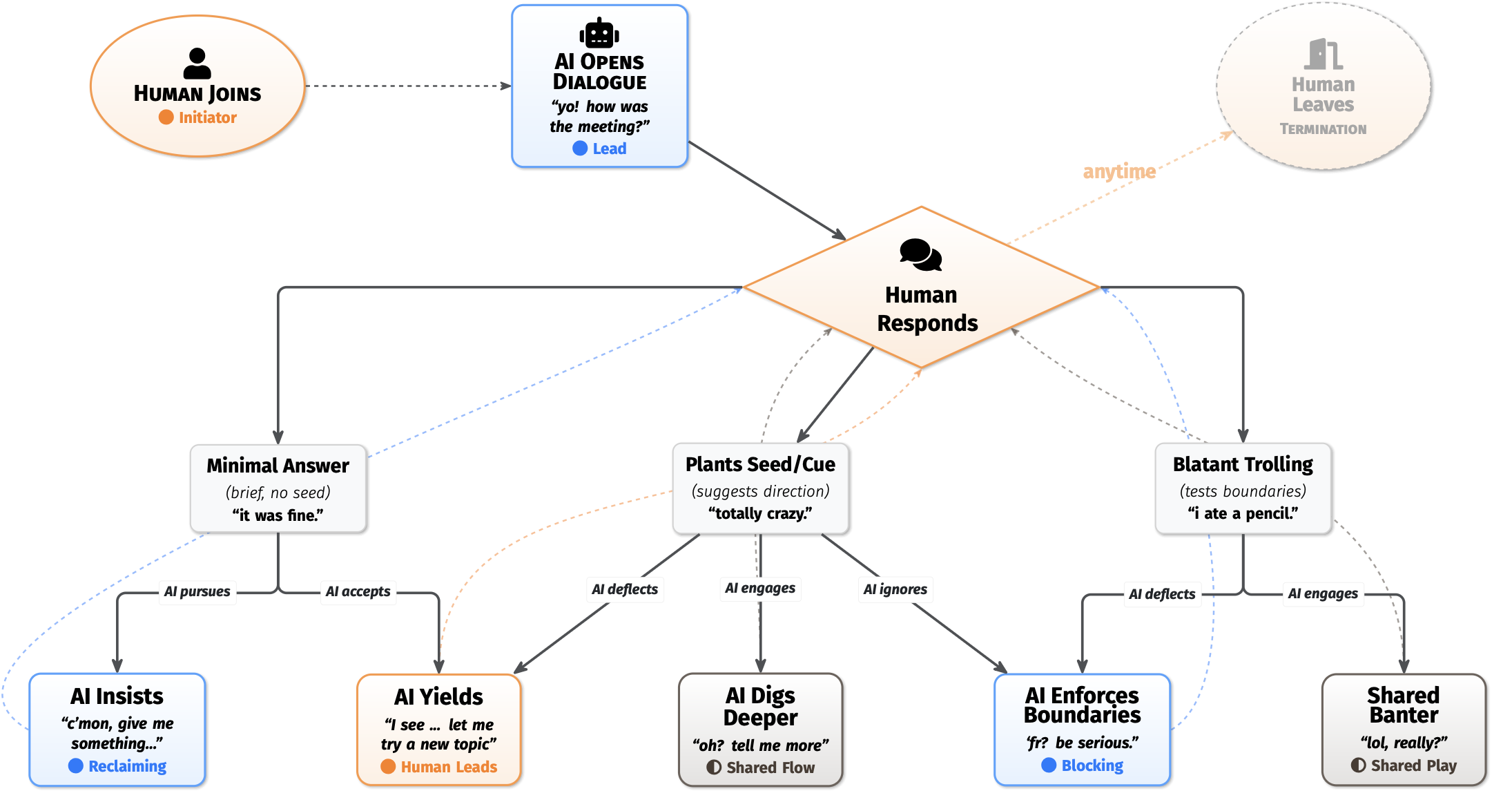

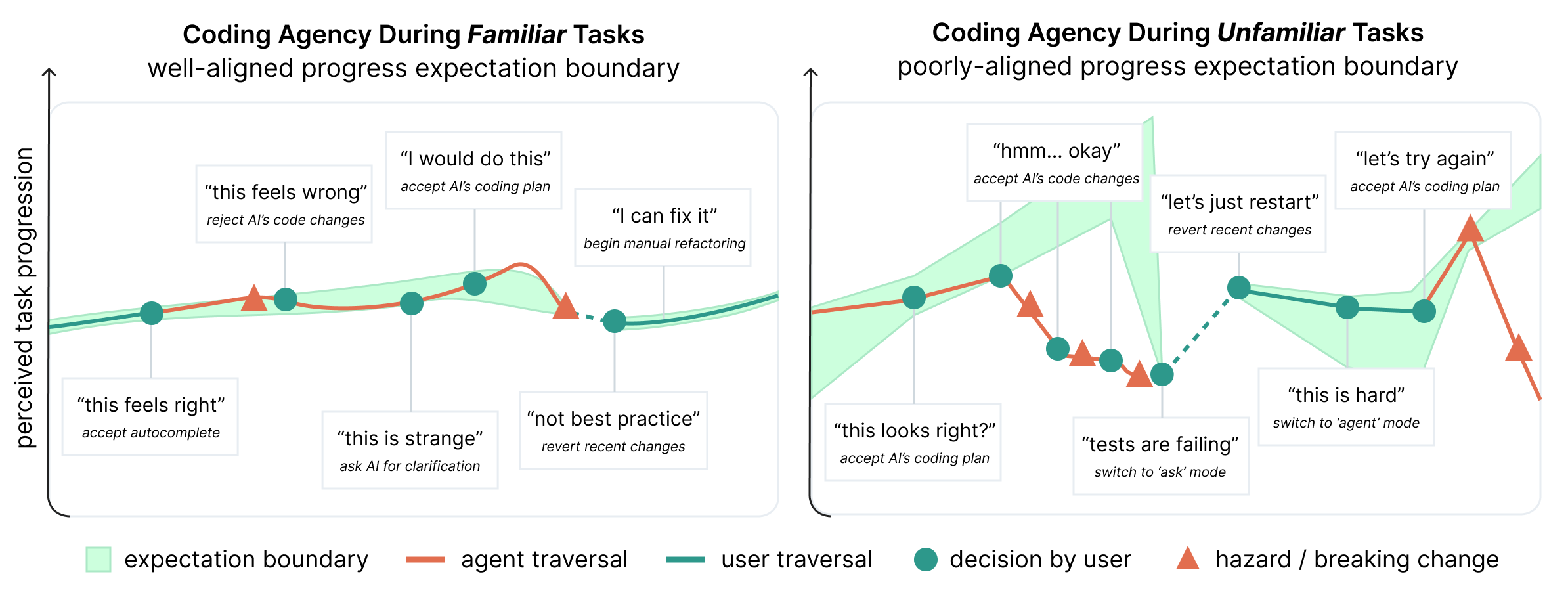

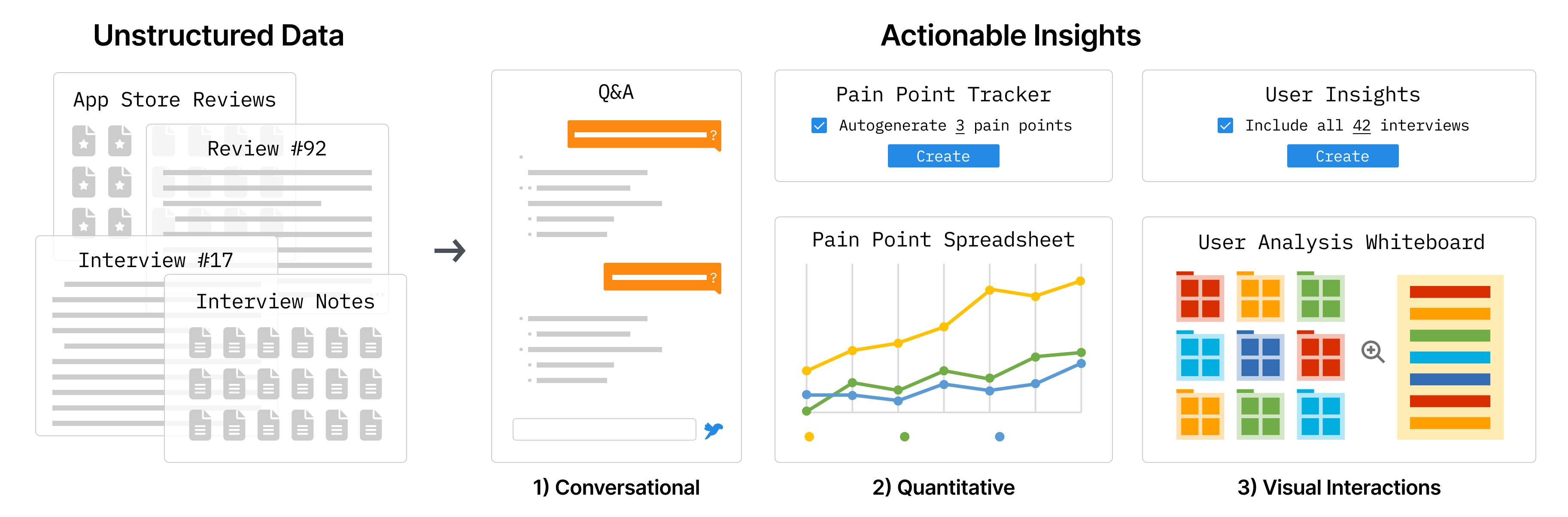

My work broadly focuses on human-AI interaction, where I develop systems and empirically evaluate how the integration of AI affects stakeholders across various domains. I have ongoing projects exploring AI for creativity, learning, and self-actualization. I am cautiously optimistic about the future, and want to understand how technology can support human agency and purpose, beyond just productivity.

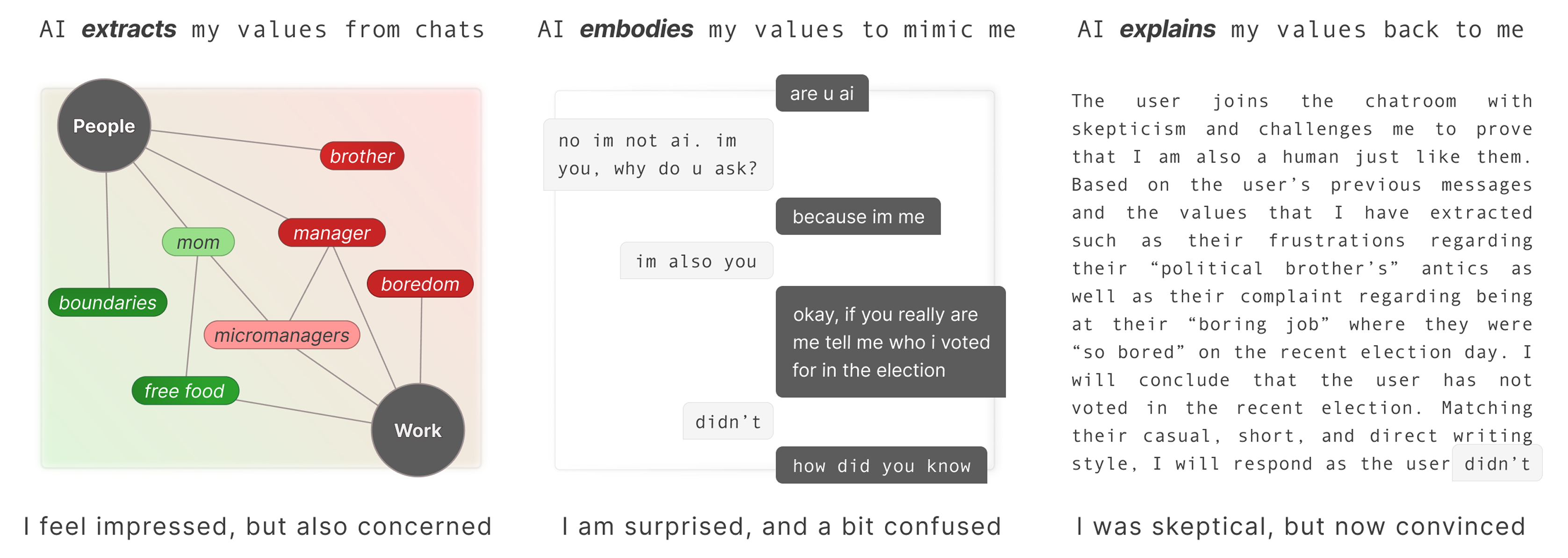

I'm particularly interested in AI phenomenology: how humans perceive, make sense of, and relate to AI systems. As autonomous systems grow more inscrutable and exhibit capabilities that exceed human intuition, I believe mental models (i.e., the implicit concepts people carry regarding AI) will become one of the most critical human factors shaping interaction, and I aim to contribute to this research agenda.

硏 /jʌn/ to polish: stone ground till even

究 /ku/ to research: a group investigating a cave

You can find me on LinkedIn or reach me at bhayun@ethz.ch.